Solving Decision Trees in Multi-Class Conditions Using Excel

Assalamualaikum Wr. Wb., greetings of peace and greetings of culture.

Decision tree algorithms come in several variants, such as CART, ID3, C4.5, and C5.0, along with tree-based ensemble methods such as Random Forest and Gradient Boosting. For the entropy-based variants (ID3, C4.5, C5.0), the basis of the calculation is the same: the first stage is finding the entropy value, and the later stages differ according to the criterion each algorithm uses.

For example, the ID3 calculation is complete at the information gain stage. The C4.5 algorithm goes one step further to the gain ratio, although it can also be run with information gain alone. A different criterion again is the Gini index, which is the measure used by CART rather than the gain ratio.

In this article I will describe how to calculate the first stage of the decision tree algorithm, the entropy value, in multi-class conditions. A multi-class condition means that the output class consists of more than two categories. But before that, let's first look at the basic formula for entropy.

Entropy Formula

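The basic formula for entropy that we often see is:

$$\text{Entropy}(S) = \sum_{i=1}^{n} -p_i \log_2 p_i$$

S = the set of cases

n = the number of partitions of S

p_i = the proportion of cases in class i, obtained from the number of cases in class i divided by the total number of cases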
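As a rough illustration of the formula, here is a minimal Python sketch. The function name `entropy_base2` and the class counts are my own assumptions for the example, not part of any library or data set from this article.

```python
import math

def entropy_base2(class_counts):
    """Entropy(S) = sum over classes of -p_i * log2(p_i)."""
    total = sum(class_counts)
    result = 0.0
    for count in class_counts:
        if count == 0:
            continue  # a class with no cases contributes nothing
        p_i = count / total  # proportion of cases in this class
        result += -p_i * math.log2(p_i)
    return result

# Hypothetical two-class data sets.
balanced = entropy_base2([5, 5])  # evenly split between two classes
skewed = entropy_base2([9, 5])    # 9 cases of one class, 5 of the other
print(balanced, round(skewed, 3))
```

With two classes the result stays between 0 (all cases in one class) and 1 (an even split).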

In the formula we can see log2. With base 2, the entropy stays between 0 and 1 only when there are two different classes. In other words, the formula as written gives a value in that range only if the output class or class label contains exactly two categories.

Most of the references we usually see, whether books, journals, papers, or websites, present the formula as above. This reflects the kind of data set they use as an example: binary classification, that is, supervised classification where the class label has the minimum of two categories.

Then can the formula be used for conditions of more than 2 classes?

If we use the formula as it stands and expect a value between 0 and 1, the answer is no. With a multi-class data set, the base-2 entropy can exceed 1: for n classes its maximum is log2(n), which is about 1.585 for 3 classes. Yet the book "Machine Learning Basic and Advanced" by Suyanto (2018) states that the entropy value lies in the interval between 0 and 1.

This matches my personal experience: when I had a data set with a label/output class of 3 categories and used the entropy formula above, the result was more than 1.
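The effect can be reproduced with a small Python sketch. It computes the base-2 entropy of a perfectly even split for 2, 3, and 4 classes; the helper name `max_entropy_base2` is hypothetical and only used for this illustration.

```python
import math

def max_entropy_base2(num_classes):
    """Base-2 entropy of a perfectly even split over num_classes classes."""
    p = 1.0 / num_classes  # every class has the same proportion
    return sum(-p * math.log2(p) for _ in range(num_classes))

# Compare the largest possible base-2 entropy for 2, 3, and 4 classes.
for k in (2, 3, 4):
    print(k, round(max_entropy_base2(k), 3))
```

For two classes the maximum is 1, while for three and four classes it equals log2(3) and log2(4), both above 1.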

So what's the solution?

Logarithm

Let's talk about logarithms first. A logarithm is a mathematical operation that is the inverse of exponentiation (raising to a power). That is, the logarithm searches for the exponent such that a given base raised to that exponent produces the entered value. (Source: Wikipedia).

Put briefly, it is a search for the exponent that turns the base into a given value. 😁 (Correct me if I'm wrong.)

This means the base in the log formula is something we can choose to suit the case.

For example, 2 to the power of 3 is 2 x 2 x 2 = 8, so the logarithm is written as 2log8 = 3, that is, log base 2 of 8 equals 3.
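The same example can be checked in Python as a quick sketch; `math.log(value, base)` is the standard-library call for a logarithm with a chosen base, and the variable names are just for illustration.

```python
import math

base, exponent = 2, 3
value = base ** exponent            # 2 x 2 x 2 = 8
recovered = math.log(value, base)   # the logarithm recovers the exponent
print(value, round(recovered, 10))
```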

Implementation

In the entropy formula above, n is the number of partitions of S, where S is the set of cases and the partitions of S are its output classes/labels. This means that n is the number of classes.

Then why is it written as log2? As explained earlier, 2 is the minimum number of classes in a supervised classification data set, and with 2 classes log2 keeps the entropy between 0 and 1.

This means that if we have a data set with more than 2 classes and want the entropy to stay between 0 and 1, the base should match the number of classes. In my case, with 3 classes, the log used is log3.
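Here is a minimal Python sketch of that idea, assuming the list of counts contains one entry for every class (including empty ones) and that there are at least two classes. The function name `entropy_base_n` and the counts are hypothetical.

```python
import math

def entropy_base_n(class_counts):
    """Entropy using log base n, where n is the number of classes."""
    n = len(class_counts)  # assumption: one count per class, n >= 2
    total = sum(class_counts)
    result = 0.0
    for count in class_counts:
        if count > 0:  # skip empty classes; log(0) is undefined
            p_i = count / total
            result += -p_i * math.log(p_i, n)
    return result

even_split = entropy_base_n([4, 4, 4])  # three classes, evenly split
uneven = entropy_base_n([6, 3, 3])      # three classes, uneven split
print(round(even_split, 3), round(uneven, 3))
```

By the change-of-base rule, this is the base-2 entropy divided by log2(n), so the value is rescaled into the range 0 to 1 rather than changed in meaning.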

How to Calculate Entropy in Excel for Multi-Class Conditions

Now that we understand the information above, the next step is how to calculate logarithms using Excel.

Basic Concepts of Calculating Logs (Logarithms) in Excel

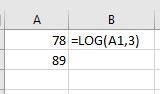

=LOG(number,[base])

number = the value whose logarithm is to be calculated; it must be a positive number

base = the base of the logarithm; if omitted, Excel uses 10

The image shows how to calculate log3 of the value located in cell A1.
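For readers who want to cross-check the spreadsheet result outside Excel, here is a rough Python counterpart of =LOG(number,[base]). The helper `excel_log` and the value assumed for cell A1 are hypothetical; only `math.log` comes from the standard library.

```python
import math

def excel_log(number, base=10):
    """Rough Python counterpart of Excel's =LOG(number,[base])."""
    if number <= 0:
        # Excel reports an error for non-positive numbers; here we raise.
        raise ValueError("number must be positive")
    return math.log(number, base)

a1 = 9  # hypothetical value in cell A1
print(excel_log(a1, 3))  # same idea as =LOG(A1,3)
```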

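Calculating Entropy in Excel

If the basic entropy formula uses log2, in Microsoft Excel we can write LOG(number,2). (IMLOG2 also gives a base-2 logarithm, but it belongs to the complex-number functions and returns its result as text, so LOG is the safer choice.) If we have more than 2 classes, for example a class attribute with 3 values, we use log3 as below.

$$\text{Entropy} = -\frac{A}{Sum}\log_3\frac{A}{Sum} - \frac{B}{Sum}\log_3\frac{B}{Sum} - \frac{C}{Sum}\log_3\frac{C}{Sum}$$

Sum is the total number of cases in the data set.

A, B, and C are the number of cases in each class.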

The Excel formula is

=((-X4/V4)*LOG((X4/V4),3)+(-Y4/V4)*LOG((Y4/V4),3)+(-Z4/V4)*LOG((Z4/V4),3))

V4 = the total number of cases

X4, Y4, and Z4 = the number of cases in each class/label/output.
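To double-check a spreadsheet result, the Excel formula can be mirrored in Python. This is only a sketch: the variable names copy the cell references, and the counts (15 cases split 6, 5, 4) are made up for the example.

```python
import math

# Hypothetical cell values: 15 cases split over three classes.
V4 = 15                 # total number of cases
X4, Y4, Z4 = 6, 5, 4    # number of cases in each class

def term(count, total, base):
    """One term of the formula: (-count/total) * LOG(count/total, base)."""
    if count == 0:
        return 0.0  # treat an empty class as contributing nothing
    p = count / total
    return -p * math.log(p, base)

# Same structure as the Excel formula, with log base 3 for three classes.
entropy = term(X4, V4, 3) + term(Y4, V4, 3) + term(Z4, V4, 3)
print(round(entropy, 4))
```

One difference to keep in mind: in Excel, LOG returns #NUM! when a class count is 0, so the spreadsheet formula would need a guard such as IF for empty classes, while this sketch simply skips them.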

Conclusion

The conclusion we can draw is that the 2 in the log2 we often encounter in the entropy formula corresponds to the number of classes/labels in the two-class case. To keep the entropy between 0 and 1, use log3 if we have 3 classes, log4 if we have 4 classes, and so on.

That is a little experience I can share on how to calculate the entropy value in the C4.5 algorithm using Excel. Good luck, and I hope this post is useful.

Thank you. If you would like to support the development of this blog, you can donate through this link: https://paypal.me/muizkhal

Hopefully useful and you can find what you are looking for "Don't Forget to Breathe and Stay Happy in the Gratitude Link".

Wassalamualaikum Wr. Wb. See you again